Abstract

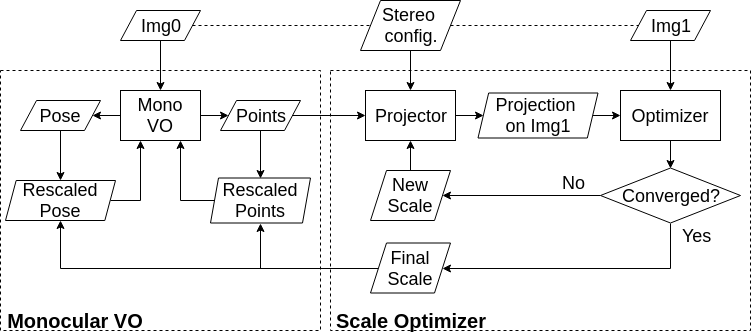

This paper proposes a novel approach for extending monocular visual odometry to a stereo camera system. Rather than triangulating 3D points from stereo matching, the proposed method uses the additional camera to accurately estimate and optimize the scale of the monocular visual odometry. Specifically, the 3D points generated by the monocular visual odometry are projected onto the other camera of the stereo pair, and the scale is recovered and optimized by directly minimizing the photometric error. The method is computationally efficient, adding minimal overhead to the stereo vision system compared to straightforward stereo matching, and it is robust to repetitive texture. Additionally, direct scale optimization enables stereo visual odometry to be based purely on the direct method. Extensive evaluation on public datasets (e.g., KITTI) and in outdoor environments (both terrestrial and underwater) demonstrates the accuracy and efficiency of stereo visual odometry extended by scale optimization, as well as its robustness in environments with challenging textures.
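The scale-recovery idea described above can be illustrated with a small synthetic sketch. This is not the authors' implementation: it assumes a rectified stereo pair with a known metric baseline, a pinhole camera, brightness constancy, and a hypothetical solver (a coarse 1D search followed by Gauss-Newton with a numerical derivative). All function names, camera parameters, and the synthetic texture are made up for illustration.

```python
import numpy as np

def bilinear(img, u, v):
    """Sample img at sub-pixel (u, v) = (column, row) locations."""
    h, w = img.shape
    u = np.clip(u, 0.0, w - 1.001)
    v = np.clip(v, 0.0, h - 1.001)
    u0, v0 = np.floor(u).astype(int), np.floor(v).astype(int)
    du, dv = u - u0, v - v0
    return ((1 - du) * (1 - dv) * img[v0, u0] + du * (1 - dv) * img[v0, u0 + 1]
            + (1 - du) * dv * img[v0 + 1, u0] + du * dv * img[v0 + 1, u0 + 1])

def project_right(points, scale, K, baseline):
    """Scale up-to-scale left-camera points, then project into the right camera."""
    p = scale * points - np.array([baseline, 0.0, 0.0])  # rectified stereo assumed
    u = K[0, 0] * p[:, 0] / p[:, 2] + K[0, 2]
    v = K[1, 1] * p[:, 1] / p[:, 2] + K[1, 2]
    return u, v

def residuals(scale, points, ref, right_img, K, baseline):
    """Photometric residuals: right-image intensity minus reference intensity."""
    u, v = project_right(points, scale, K, baseline)
    return bilinear(right_img, u, v) - ref

def optimize_scale(points, ref, right_img, K, baseline, candidates, iters=20):
    """Coarse 1D search over candidate scales, then Gauss-Newton refinement."""
    args = (points, ref, right_img, K, baseline)
    costs = [np.sum(residuals(s, *args) ** 2) for s in candidates]
    s = float(candidates[int(np.argmin(costs))])
    for _ in range(iters):
        r = residuals(s, *args)
        eps = 1e-4 * s
        # Central-difference derivative of each residual with respect to the scale.
        J = (residuals(s + eps, *args) - residuals(s - eps, *args)) / (2 * eps)
        denom = J @ J
        if denom < 1e-12:
            break
        step = -(J @ r) / denom
        s += step
        if abs(step) < 1e-9:
            break
    return s

def make_texture(h, w, rng, n_waves=12):
    """Smooth synthetic image built from a few random low-frequency waves."""
    vv, uu = np.mgrid[0:h, 0:w].astype(float)
    img = np.zeros((h, w))
    for _ in range(n_waves):
        fu, fv = rng.uniform(0.03, 0.12), rng.uniform(-0.08, 0.08)
        img += np.cos(fu * uu + fv * vv + rng.uniform(0, 2 * np.pi))
    return img / n_waves

def synthetic_demo(true_scale=2.37, baseline=0.5, n=500, seed=0):
    """Build a synthetic scene and recover the scale; returns (estimate, truth)."""
    rng = np.random.default_rng(seed)
    K = np.array([[400.0, 0.0, 320.0], [0.0, 400.0, 240.0], [0.0, 0.0, 1.0]])
    right_img = make_texture(480, 640, rng)
    # Metric points in the left camera frame, generated from left pixels and depths.
    u_l, v_l = rng.uniform(100, 600, n), rng.uniform(20, 460, n)
    z = rng.uniform(4.0, 20.0, n)
    metric = np.stack([(u_l - K[0, 2]) / K[0, 0] * z,
                       (v_l - K[1, 2]) / K[1, 1] * z, z], axis=1)
    mono = metric / true_scale  # what a monocular VO would output (unknown scale)
    # Reference intensities: in a real system these come from the left image;
    # under brightness constancy they equal the right image at the true projection.
    ref = bilinear(right_img, *project_right(mono, true_scale, K, baseline))
    est = optimize_scale(mono, ref, right_img, K, baseline,
                         candidates=np.linspace(0.5, 5.0, 91))
    return est, true_scale

est, truth = synthetic_demo()
print(f"estimated scale: {est:.4f}, true scale: {truth:.4f}")
```

Because only a single scalar is optimized and each point requires one projection and one image lookup, the per-frame cost stays small, which is consistent with the efficiency argument made in the abstract. In a rectified setup the scale only changes the horizontal coordinate of each projection, so the search is effectively one-dimensional along the epipolar lines.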

Methodology

Experimental Evaluations

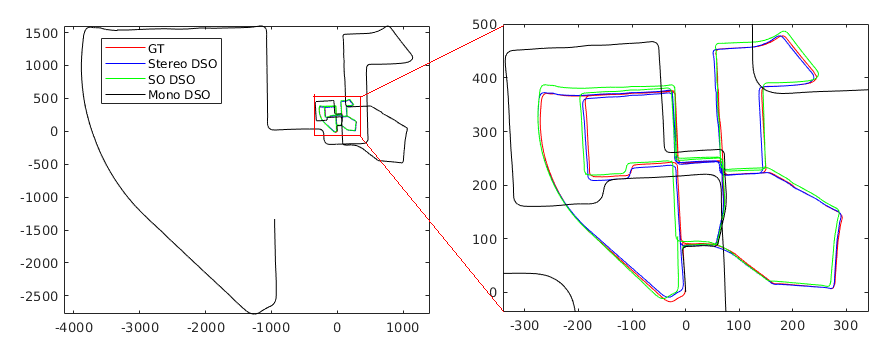

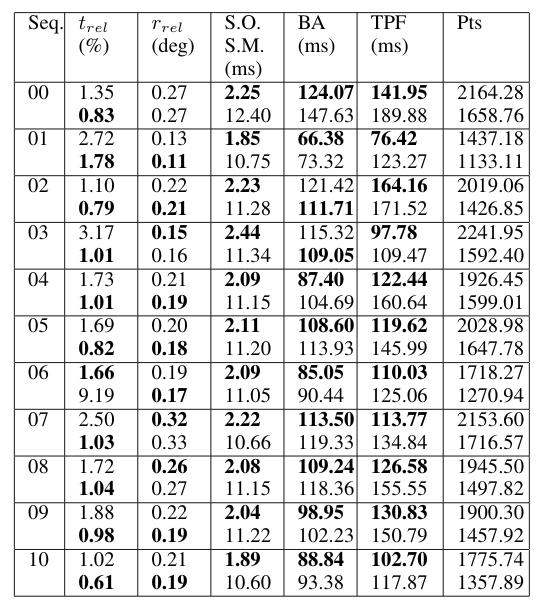

KITTI

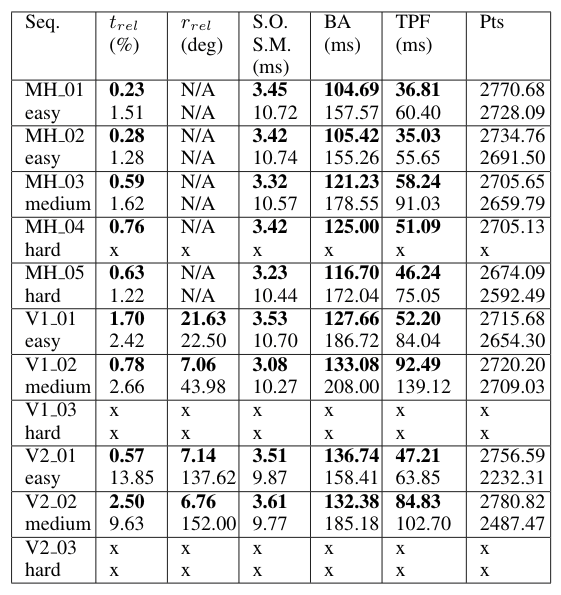

EuRoC

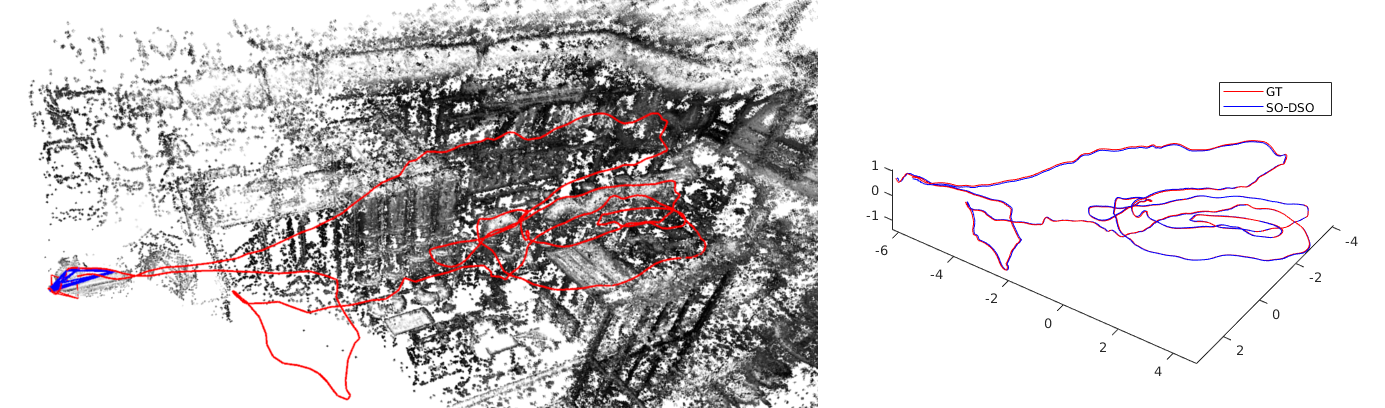

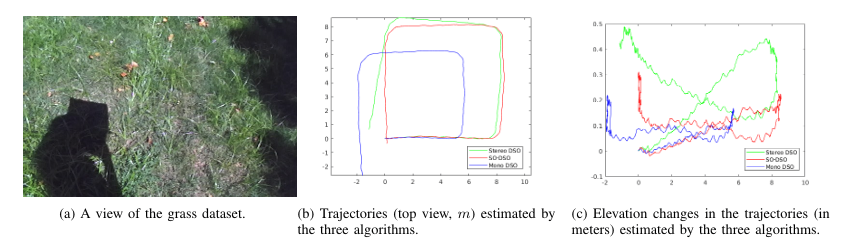

ZED camera dataset

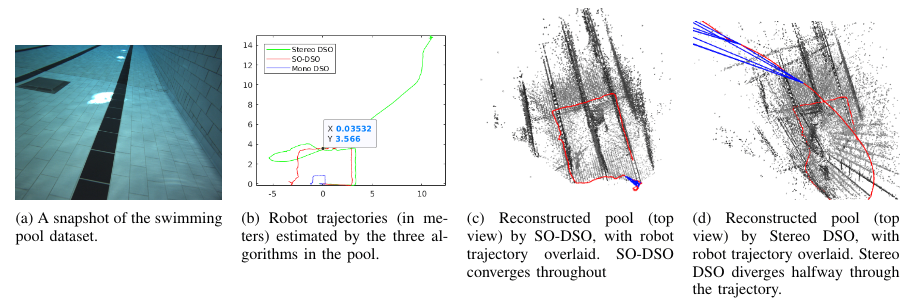

Pool dataset

Links

Paper (IROS19)