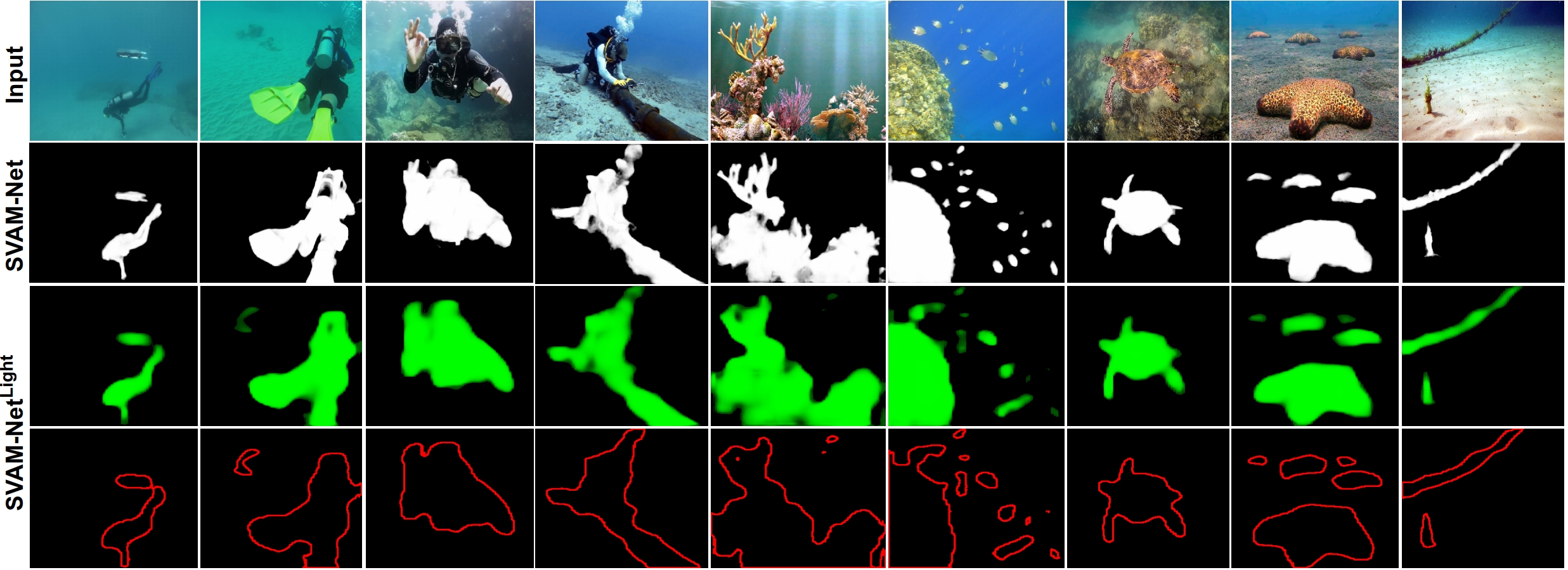

'Where to look' is a challenging and open problem in underwater robot vision. An essential capability of visually-guided AUVs is to identify interesting and salient objects in the scene so that they can make important operational decisions accurately. In this project, we present a holistic approach to saliency-guided visual attention modeling (SVAM) for use by autonomous underwater robots. Our proposed model, named SVAM-Net, integrates deep visual features at various scales and semantics for effective salient object detection (SOD) in natural underwater images. The SVAM-Net architecture is configured to jointly accommodate bottom-up and top-down learning within two separate branches of the network that share the same encoding layers. We design dedicated spatial attention modules (SAMs) along these learning pathways to exploit the coarse-level and top-level semantic features for SOD at four stages of abstraction. In particular, the bottom-up pipeline extracts semantically rich hierarchical features from early encoding layers, which facilitates an abstract yet accurate saliency prediction at a fast rate; we denote this decoupled bottom-up pipeline as SVAM-NetLight. On the other hand, we design a residual refinement module (RRM) that ensures fine-grained saliency estimation through the deeper top-down pipeline. Detailed demonstrations and results can be found in the paper.

In the implementation, we incorporate comprehensive end-to-end supervision of SVAM-Net using large-scale, diverse training data consisting of both terrestrial and underwater imagery. Subsequently, we validate the effectiveness of its learning components and various loss functions through extensive ablation experiments. In addition to using existing datasets, we release a new challenging test set named USOD for the benchmark evaluation of SVAM-Net and other underwater SOD models. Through a series of qualitative and quantitative analyses, we show that SVAM-Net provides state-of-the-art (SOTA) performance for SOD on underwater imagery, exhibits significantly better generalization performance on challenging test cases than existing solutions, and achieves fast end-to-end inference on single-board devices. Moreover, we demonstrate that a delicate balance between robust performance and computational efficiency makes SVAM-NetLight suitable for real-time use by visually-guided underwater robots.
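As a rough illustration of what a quantitative comparison on a test set such as USOD can involve, the sketch below scores a predicted saliency map against a ground-truth mask. The text above does not name the metrics used; mean absolute error (MAE) and the F-measure are assumed here because they are common choices for SOD evaluation. The function names, the 0.5 binarization threshold, and the `beta_sq=0.3` weighting are illustrative assumptions, not values taken from this repository.

```python
# Hypothetical helpers for scoring one saliency map; not part of the SVAM-Net code.
# Maps are 2D lists of floats in [0, 1] with identical shapes.

def mean_absolute_error(pred, truth):
    """Average per-pixel absolute difference between prediction and ground truth."""
    total = 0.0
    count = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            total += abs(p - t)
            count += 1
    return total / count


def f_measure(pred, truth, threshold=0.5, beta_sq=0.3):
    """Weighted harmonic mean of precision and recall after binarizing `pred`.

    `threshold` and `beta_sq` are assumed defaults for illustration only.
    """
    tp = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            predicted = p >= threshold
            actual = t >= 0.5
            if predicted and actual:
                tp += 1
            elif predicted:
                fp += 1
            elif actual:
                fn += 1
    if tp == 0:
        return 0.0  # no correctly detected salient pixels
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return (1 + beta_sq) * precision * recall / (beta_sq * precision + recall)


# Toy 2x2 example: one truly salient pixel, one false positive.
pred = [[0.9, 0.2], [0.6, 0.1]]
truth = [[1.0, 0.0], [0.0, 0.0]]
mae = mean_absolute_error(pred, truth)
f_score = f_measure(pred, truth)
```

Lower MAE and higher F-measure indicate a closer match to the ground truth. For real images, the same computations would normally be vectorized over arrays and averaged across the whole test set.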

Important Pointers:
